One of the most talked-about tech milestones of 2017 was the introduction of CoreML at Apple's Worldwide Developers Conference (WWDC). It generated a lot of hype, especially among software developers, because it opens up new opportunities for building machine learning features into apps on Apple products.

In short, CoreML is Apple’s newest machine learning framework for developers. (Updated: see CoreML2 here.) As a first-party framework, it can be used across Apple devices, from iPhones to iPads to the Apple Watch. We have previously broken down what machine learning is in another article, but let’s do a quick recap here.

A subcategory of artificial intelligence, machine learning is where computers learn from a large body of data without being explicitly programmed for the task. The computer finds patterns in existing data, builds and refines a model (in the case of deep learning, by layering neural networks), and eventually applies that model to new sets of data. There are two further subcategories of machine learning, supervised and unsupervised, which are used for different purposes: supervised learning works from labelled examples, while unsupervised learning looks for structure in unlabelled data.
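To make the supervised/unsupervised distinction concrete, here is a minimal sketch in plain Python. It is not CoreML code: the purchase amounts, the labels and the function names are all hypothetical, chosen only for illustration. The supervised half learns from labelled examples, and the unsupervised half has to discover the two groups on its own.

```python
from statistics import mean

def fit_centroids(samples, labels):
    """Supervised: labels are given, so learn one centroid (mean) per label."""
    groups = {}
    for x, label in zip(samples, labels):
        groups.setdefault(label, []).append(x)
    return {label: mean(xs) for label, xs in groups.items()}

def predict(centroids, x):
    """Apply the learned model to new data: pick the label with the nearest centroid."""
    return min(centroids, key=lambda label: abs(centroids[label] - x))

def two_means(samples, iterations=10):
    """Unsupervised: no labels, so discover two clusters (1-D k-means with k=2).

    Assumes the samples are not all identical.
    """
    low, high = min(samples), max(samples)
    for _ in range(iterations):
        near_low = [x for x in samples if abs(x - low) <= abs(x - high)]
        near_high = [x for x in samples if abs(x - low) > abs(x - high)]
        low, high = mean(near_low), mean(near_high)
    return low, high

# Hypothetical data: purchase amounts, with labels only for the supervised case.
amounts = [5.0, 7.0, 6.0, 50.0, 55.0, 60.0]
labels = ["small", "small", "small", "large", "large", "large"]

centroids = fit_centroids(amounts, labels)
print(predict(centroids, 8.0))   # label for a purchase the model has not seen
print(two_means(amounts))        # cluster centres found without any labels
```

Both halves end up describing the same two groups of purchases, but only the supervised model can name them, because only it was told what the groups are called.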

With CoreML, Apple devices now come with a machine learning framework that can run already-trained models inside apps in an efficient and secure way. Though CoreML cannot train a model on new data, developers can use pre-trained models in their apps as long as each model is first converted to a format CoreML accepts.
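The split between training elsewhere and only running the model on the device can be sketched as follows. This is a conceptual illustration in plain Python, not the CoreML API: the JSON string merely stands in for a converted model file, and `train`, `export_model` and `OnDevicePredictor` are hypothetical names.

```python
import json

def train(xs, ys):
    """Training step (done off-device): fit y = slope * x + intercept by least squares."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return {"slope": slope, "intercept": mean_y - slope * mean_x}

def export_model(params):
    """Conversion step: serialise the learned parameters (JSON stands in for a model file)."""
    return json.dumps(params)

class OnDevicePredictor:
    """Inference only: loads fixed parameters and never updates them."""

    def __init__(self, serialized):
        params = json.loads(serialized)
        self.slope = params["slope"]
        self.intercept = params["intercept"]

    def predict(self, x):
        return self.slope * x + self.intercept

# Train and export once, then ship only the exported model with the app.
model_file = export_model(train([1.0, 2.0, 3.0, 4.0], [3.0, 5.0, 7.0, 9.0]))
predictor = OnDevicePredictor(model_file)
print(predictor.predict(10.0))
```

The predictor class has no training method at all, which mirrors the constraint described above: the app receives a finished model and can only ask it for predictions.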

Apple’s Core ML framework currently supports deep neural networks, tree ensembles, support vector machines, generalized linear models, feature engineering and pipeline models. Each of these can be leveraged to create countless unique app features, allowing for much more creative freedom than before.

As a mobile app enabler that also specialises in IoT and AI technologies, we can use CoreML's deep learning support to apply our video analytics expertise. This means that we are able to further integrate our deep learning models into apps, especially for face recognition.

Mobile apps with face recognition can make the payment process more efficient for e-commerce businesses, and can even serve as a personalisation tool for loyalty platforms.

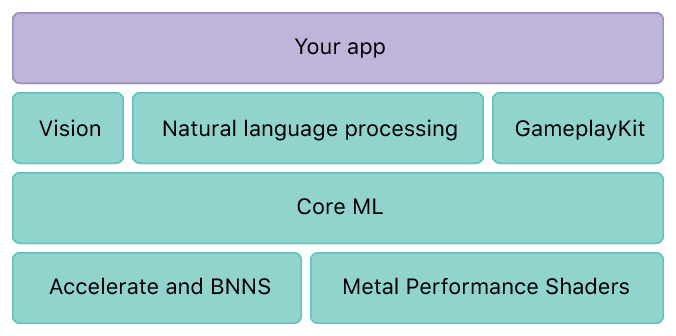

CoreML is definitely a step up from the previous ML frameworks released by Apple, such as Accelerate/Basic Neural Network Subroutines (BNNS) for Central Processing Units (CPUs) and Metal Performance Shaders CNN (MPSCNN) for Graphics Processing Units (GPUs). Because these were separate frameworks with separate functions, they were more time-consuming and complex for developers to integrate. CoreML makes life easier by building on both and deciding for itself when to use the CPU and when to use the GPU.

Domain-specific frameworks that CoreML supports:

- Vision: Provides detection and analysis for objects and images. Essentially computer vision.

- Foundation: Provides natural language processing functionality (text)

- GameplayKit: Provides tools for game development (logic and behaviour analysis)

Img Src: Apple Developer

So enough of developer talk. What does CoreML mean for you as a user?

The main benefit for users is CoreML's best feature: on-device performance. Running the algorithms and analysis on the device itself reduces power consumption and memory footprint, and protects user data. What does this mean? Users do not need to worry about their data leaking, because machine learning through CoreML is done on the device itself and the data does not need to be transmitted to an external server or cloud for processing.

This also means that if mobile apps are built with CoreML functionality, users can use those features even with no access to the internet (i.e. offline). This results in less loading time for the user and can be key when building apps for security and communication purposes. Core ML takes full advantage of the GPU and CPU on iOS devices, so each prediction is made on the device instead of waiting on a network request, which speeds up the process.

Nonetheless, as with every piece of new technology, there are downsides. The use of CoreML is still pretty limited, as it is unable to do training on-device, and it currently only supports regression and classification models.
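For readers unfamiliar with the two model types, the difference is in what comes out: a regression model returns a continuous number, while a classification model returns a label, usually with a probability for each possible label. The sketch below shows this in plain Python. The weights, scores and class names are made up for illustration, and the code does not use the CoreML API.

```python
import math

def regress(features, weights, bias):
    """Regression: the output is a continuous number (a weighted sum plus a bias)."""
    return sum(f * w for f, w in zip(features, weights)) + bias

def classify(scores):
    """Classification: turn per-class scores into probabilities (softmax), then pick the top label."""
    peak = max(scores.values())  # subtract the peak for numerical stability
    exps = {label: math.exp(score - peak) for label, score in scores.items()}
    total = sum(exps.values())
    probabilities = {label: e / total for label, e in exps.items()}
    return max(probabilities, key=probabilities.get), probabilities

# Hypothetical inputs: two numeric features, and raw scores for three classes.
price = regress([3.0, 2.0], weights=[10.0, 5.0], bias=1.0)
label, probabilities = classify({"cat": 2.0, "dog": 0.5, "bird": -1.0})

print(price)          # a single number
print(label)          # the most likely class
print(probabilities)  # one probability per class, summing to 1
```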

However, as mentioned above, one of the most powerful uses of CoreML that can be immediately harnessed is face/image recognition. Some examples helpful for e-commerce are: user detection, credit card identification, location recognition, direct/indirect mapping for recommendation systems and more.

Supported by layers upon layers of neural networks, machine learning, and with it deep learning, will be indispensable tools in the future.

If you already have existing software that you would like to integrate machine learning functions with, let’s get in touch and see how we can help. If you would like to create a mobile application or piece of software with the models mentioned in this article, we are more than happy to get involved as well.

Simply drop us an email at insight@motherapp.com!
